GitLab Container Registry administration (FREE SELF)

With the GitLab Container Registry, every project can have its own space to store Docker images.

Read more about the Docker Registry in the Docker documentation.

This document is the administrator's guide. To learn how to use the GitLab Container Registry, see the user documentation.

Enable the Container Registry

Omnibus GitLab installations

If you installed GitLab by using the Omnibus installation package, the Container Registry may or may not be available by default.

The Container Registry is automatically enabled and available on your GitLab domain, port 5050 if:

- You're using the built-in Let's Encrypt integration, and

- You're using GitLab 12.5 or later.

Otherwise, the Container Registry is not enabled. To enable it:

- You can configure it for your GitLab domain, or

- You can configure it for a different domain.

The Container Registry works under HTTPS by default. You can use HTTP but it's not recommended and is beyond the scope of this document. Read the insecure Registry documentation if you want to implement this.

Installations from source

If you have installed GitLab from source:

- You must deploy a registry using the image corresponding to the version of GitLab you are installing (for example: registry.gitlab.com/gitlab-org/build/cng/gitlab-container-registry:v3.15.0-gitlab).

- After the installation is complete, to enable it, you must configure the Registry's settings in gitlab.yml.

- Use the sample NGINX configuration file from under lib/support/nginx/registry-ssl and edit it to match the host, port, and TLS certificate paths.

The contents of gitlab.yml are:

    registry:
      enabled: true
      host: registry.gitlab.example.com
      port: 5005
      api_url: http://localhost:5000/
      key: config/registry.key
      path: shared/registry
      issuer: gitlab-issuer

Where:

| Parameter | Description |
|---|---|
| enabled | true or false. Enables the Registry in GitLab. By default this is false. |
| host | The host URL under which the Registry runs and that users can use. |
| port | The port the external Registry domain listens on. |
| api_url | The internal API URL under which the Registry is exposed. It defaults to http://localhost:5000. Do not change this unless you are setting up an external Docker registry. |
| key | The private key location that is a pair of Registry's rootcertbundle. Read the token auth configuration documentation. |
| path | This should be the same directory as specified in Registry's rootdirectory. Read the storage configuration documentation. This path needs to be readable by the GitLab user, the web-server user and the Registry user. Read more in Configure storage for the Container Registry. |
| issuer | This should be the same value as configured in Registry's issuer. Read the token auth configuration documentation. |

A Registry init file is not shipped with GitLab if you install it from source. Hence, restarting GitLab does not restart the Registry should you modify its settings. Read the upstream documentation on how to achieve that.

At the absolute minimum, make sure your Registry configuration has container_registry as the service and https://gitlab.example.com/jwt/auth as the realm:

    auth:
      token:
        realm: https://gitlab.example.com/jwt/auth
        service: container_registry
        issuer: gitlab-issuer
        rootcertbundle: /root/certs/certbundle

WARNING: If auth is not set up, users can pull Docker images without authentication.

Container Registry domain configuration

There are two ways you can configure the Registry's external domain. Either:

- Use the existing GitLab domain. The Registry listens on a port and reuses the TLS certificate from GitLab.

- Use a completely separate domain with a new TLS certificate for that domain.

Because the Container Registry requires a TLS certificate, cost may be a factor.

Take this into consideration before configuring the Container Registry for the first time.

Configure Container Registry under an existing GitLab domain

If the Registry is configured to use the existing GitLab domain, you can expose the Registry on a port. This way you can reuse the existing GitLab TLS certificate.

If the GitLab domain is https://gitlab.example.com and the port to the outside world is 5050, set the following in gitlab.rb (Omnibus GitLab) or gitlab.yml (installations from source).

Ensure you choose a port different from the one that the Registry listens to (5000 by default), otherwise conflicts occur.

NOTE: Host and container firewall rules must be configured to allow traffic in through the port listed under the registry_external_url line, rather than the port listed under gitlab_rails['registry_port'] (default 5000).
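The port rule above can be checked mechanically before you reconfigure. The following is a minimal Ruby sketch, not part of GitLab: the helper name registry_port_conflict? and its default internal address are assumptions made for illustration.

```ruby
require "uri"

# Hypothetical helper (not a GitLab API): returns true when the port in the
# external Registry URL equals the port the Registry service listens on.
def registry_port_conflict?(external_url, internal_addr = "localhost:5000")
  external_port = URI.parse(external_url).port          # 443 if no port is given for https
  internal_port = Integer(internal_addr.split(":").last)
  external_port == internal_port
end

puts registry_port_conflict?("https://gitlab.example.com:5050")
puts registry_port_conflict?("https://gitlab.example.com:5000")
```

A true result means the external port would collide with the Registry's own listener and another port should be chosen.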

Omnibus GitLab installations

- Your /etc/gitlab/gitlab.rb should contain the Registry URL as well as the path to the existing TLS certificate and key used by GitLab:

      registry_external_url 'https://gitlab.example.com:5050'

  The registry_external_url is listening on HTTPS under the existing GitLab URL, but on a different port.

  If your TLS certificate is not in /etc/gitlab/ssl/gitlab.example.com.crt and key not in /etc/gitlab/ssl/gitlab.example.com.key, uncomment the lines below:

      registry_nginx['ssl_certificate'] = "/path/to/certificate.pem"
      registry_nginx['ssl_certificate_key'] = "/path/to/certificate.key"

- Save the file and reconfigure GitLab for the changes to take effect.

- Validate using:

      openssl s_client -showcerts -servername gitlab.example.com -connect gitlab.example.com:5050 > cacert.pem

If your certificate provider provides the CA Bundle certificates, append them to the TLS certificate file.

An administrator may want the container registry listening on an arbitrary port such as 5678. However, the registry and application server are behind an AWS application load balancer that only listens on ports 80 and 443. The administrator may remove the port number for registry_external_url, so HTTP or HTTPS is assumed. Then, the rules apply that map the load balancer to the registry from ports 80 or 443 to the arbitrary port. This is important if users rely on the docker login example in the container registry. Here's an example:

    registry_external_url 'https://registry-gitlab.example.com'
    registry_nginx['redirect_http_to_https'] = true
    registry_nginx['listen_port'] = 5678

Installations from source

- Open /home/git/gitlab/config/gitlab.yml, find the registry entry and configure it with the following settings:

      registry:
        enabled: true
        host: gitlab.example.com
        port: 5050

- Save the file and restart GitLab for the changes to take effect.

- Make the relevant changes in NGINX as well (domain, port, TLS certificates path).

Users should now be able to sign in to the Container Registry with their GitLab credentials using:

    docker login gitlab.example.com:5050

Configure Container Registry under its own domain

When the Registry is configured to use its own domain, you need a TLS certificate for that specific domain (for example, registry.example.com). You might need a wildcard certificate if hosted under a subdomain of your existing GitLab domain. For example, *.gitlab.example.com is a wildcard that matches registry.gitlab.example.com, and is distinct from *.example.com.
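To make the wildcard distinction concrete, here is a small Ruby sketch of the usual rule that a wildcard stands in for exactly one left-most DNS label. The helper wildcard_covers? is hypothetical and simplified; it is not how GitLab or NGINX validates certificates.

```ruby
# Hypothetical helper: checks whether a certificate name (possibly a
# wildcard such as "*.gitlab.example.com") covers a given hostname.
# Assumption: the wildcard replaces exactly one left-most label.
def wildcard_covers?(pattern, hostname)
  return pattern.casecmp?(hostname) unless pattern.start_with?("*.")

  suffix = pattern[1..]                        # for example ".gitlab.example.com"
  return false unless hostname.downcase.end_with?(suffix.downcase)

  label = hostname[0...-suffix.length]         # the part the wildcard stands for
  !label.empty? && !label.include?(".")
end

puts wildcard_covers?("*.gitlab.example.com", "registry.gitlab.example.com")
puts wildcard_covers?("*.example.com", "registry.gitlab.example.com")
```

Under this rule, a certificate for *.example.com would cover gitlab.example.com but not a registry hosted one level deeper.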

In addition to manually generated SSL certificates, certificates automatically generated by Let's Encrypt are also supported in Omnibus installs.

Let's assume that you want the container Registry to be accessible at https://registry.gitlab.example.com.

Omnibus GitLab installations

- Place your TLS certificate and key in /etc/gitlab/ssl/registry.gitlab.example.com.crt and /etc/gitlab/ssl/registry.gitlab.example.com.key and make sure they have correct permissions:

      chmod 600 /etc/gitlab/ssl/registry.gitlab.example.com.*

- After the TLS certificate is in place, edit /etc/gitlab/gitlab.rb with:

      registry_external_url 'https://registry.gitlab.example.com'

  The registry_external_url is listening on HTTPS.

- Save the file and reconfigure GitLab for the changes to take effect.

If you have a wildcard certificate, you must specify the path to the certificate in addition to the URL. In this case, /etc/gitlab/gitlab.rb looks like:

    registry_nginx['ssl_certificate'] = "/etc/gitlab/ssl/certificate.pem"
    registry_nginx['ssl_certificate_key'] = "/etc/gitlab/ssl/certificate.key"

Installations from source

- Open /home/git/gitlab/config/gitlab.yml, find the registry entry and configure it with the following settings:

      registry:
        enabled: true
        host: registry.gitlab.example.com

- Save the file and restart GitLab for the changes to take effect.

- Make the relevant changes in NGINX as well (domain, port, TLS certificates path).

Users should now be able to sign in to the Container Registry using their GitLab credentials:

    docker login registry.gitlab.example.com

Disable Container Registry site-wide

When you disable the Registry by following these steps, you do not remove any existing Docker images. This is handled by the Registry application itself.

Omnibus GitLab

- Open /etc/gitlab/gitlab.rb and set registry['enable'] to false:

      registry['enable'] = false

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

- Open /home/git/gitlab/config/gitlab.yml, find the registry entry and set enabled to false:

      registry:
        enabled: false

- Save the file and restart GitLab for the changes to take effect.

Disable Container Registry for new projects site-wide

If the Container Registry is enabled, it is available on all new projects. To disable this behavior and let project owners enable the Container Registry themselves, follow the steps below.

Omnibus GitLab installations

- Edit /etc/gitlab/gitlab.rb and add the following line:

      gitlab_rails['gitlab_default_projects_features_container_registry'] = false

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

- Open /home/git/gitlab/config/gitlab.yml, find the default_projects_features entry and configure it so that container_registry is set to false:

      ## Default project features settings
      default_projects_features:
        issues: true
        merge_requests: true
        wiki: true
        snippets: false
        builds: true
        container_registry: false

- Save the file and restart GitLab for the changes to take effect.

Increase token duration

In GitLab, tokens for the Container Registry expire every five minutes. To increase the token duration:

- On the top bar, select Main menu > Admin.

- On the left sidebar, select Settings > CI/CD.

- Expand Container Registry.

- For the Authorization token duration (minutes), update the value.

- Select Save changes.

Configure storage for the Container Registry

NOTE: For storage backends that support it, you can use object versioning to preserve, retrieve, and restore the non-current versions of every object stored in your buckets. However, this may result in higher storage usage and costs. Due to how the registry operates, image uploads are first stored in a temporary path and then transferred to a final location. For object storage backends, including S3 and GCS, this transfer is achieved with a copy followed by a delete. With object versioning enabled, these deleted temporary upload artifacts are kept as non-current versions, therefore increasing the storage bucket size. To ensure that non-current versions are deleted after a given amount of time, you should configure an object lifecycle policy with your storage provider.

WARNING: Do not directly modify the files or objects stored by the container registry. Anything other than the registry writing or deleting these entries can lead to instance-wide data consistency and instability issues from which recovery may not be possible.

You can configure the Container Registry to use various storage backends by configuring a storage driver. By default the GitLab Container Registry is configured to use the file system driver configuration.

The different supported drivers are:

| Driver | Description |
|---|---|
| filesystem | Uses a path on the local file system |
| azure | Microsoft Azure Blob Storage |
| gcs | Google Cloud Storage |
| s3 | Amazon Simple Storage Service. Be sure to configure your storage bucket with the correct S3 Permission Scopes. |
| swift | OpenStack Swift Object Storage |
| oss | Aliyun OSS |

Although most S3 compatible services (like MinIO) should work with the Container Registry, we only guarantee support for AWS S3. Because we cannot assert the correctness of third-party S3 implementations, we can debug issues, but we cannot patch the registry unless an issue is reproducible against an AWS S3 bucket.

Read more about the individual driver's configuration options in the Docker Registry docs.

Use file system

If you want to store your images on the file system, you can change the storage path for the Container Registry by following the steps below.

This path is accessible to:

- The user running the Container Registry daemon.

- The user running GitLab.

All GitLab, Registry, and web server users must have access to this directory.

Omnibus GitLab installations

The default location where images are stored in Omnibus is /var/opt/gitlab/gitlab-rails/shared/registry. To change it:

- Edit /etc/gitlab/gitlab.rb:

      gitlab_rails['registry_path'] = "/path/to/registry/storage"

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

The default location where images are stored in source installations is /home/git/gitlab/shared/registry. To change it:

- Open /home/git/gitlab/config/gitlab.yml, find the registry entry and change the path setting:

      registry:
        path: shared/registry

- Save the file and restart GitLab for the changes to take effect.

Use object storage

If you want to store your images on object storage, you can change the storage driver for the Container Registry.

Read more about using object storage with GitLab.

WARNING: GitLab does not back up Docker images that are not stored on the file system. Enable backups with your object storage provider if desired.

Omnibus GitLab installations

To configure the s3 storage driver in Omnibus:

- Edit /etc/gitlab/gitlab.rb:

      registry['storage'] = {
        's3' => {
          'accesskey' => 's3-access-key',
          'secretkey' => 's3-secret-key-for-access-key',
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region',
          'regionendpoint' => 'your-s3-regionendpoint'
        }
      }

  To avoid using static credentials, use an IAM role and omit accesskey and secretkey. Make sure that your IAM profile follows the permissions documented by Docker.

      registry['storage'] = {
        's3' => {
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region'
        }
      }

  If using with an AWS S3 VPC endpoint, then set regionendpoint to your VPC endpoint address and set pathstyle to false:

      registry['storage'] = {
        's3' => {
          'accesskey' => 's3-access-key',
          'secretkey' => 's3-secret-key-for-access-key',
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region',
          'regionendpoint' => 'your-s3-vpc-endpoint',
          'pathstyle' => false
        }
      }

  - regionendpoint is only required when configuring an S3 compatible service such as MinIO, or when using an AWS S3 VPC Endpoint.
  - your-s3-bucket should be the name of a bucket that exists, and can't include subdirectories.
  - pathstyle should be set to true to use host/bucket_name/object style paths instead of bucket_name.host/object. Set to false for AWS S3.

  You can set a rate limit on connections to S3 to avoid 503 errors from the S3 API. To do this, set maxrequestspersecond to a number within the S3 request rate threshold:

      registry['storage'] = {
        's3' => {
          'accesskey' => 's3-access-key',
          'secretkey' => 's3-secret-key-for-access-key',
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region',
          'regionendpoint' => 'your-s3-regionendpoint',
          'maxrequestspersecond' => 100
        }
      }

- Save the file and reconfigure GitLab for the changes to take effect.
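The two addressing styles that pathstyle switches between can be illustrated with a short Ruby sketch. The function s3_object_url, the host name, and the object key below are made up for illustration; the registry builds its own request URLs internally.

```ruby
# Hypothetical illustration of the two S3 addressing styles described above.
# pathstyle: true  -> host/bucket_name/object
# pathstyle: false -> bucket_name.host/object
def s3_object_url(host, bucket, key, pathstyle:)
  if pathstyle
    "https://#{host}/#{bucket}/#{key}"
  else
    "https://#{bucket}.#{host}/#{key}"
  end
end

# Example values are placeholders, not real endpoints.
puts s3_object_url("storage.example.com", "your-s3-bucket", "docker/registry/v2/blob", pathstyle: true)
puts s3_object_url("storage.example.com", "your-s3-bucket", "docker/registry/v2/blob", pathstyle: false)
```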

Installations from source

Configuring the storage driver is done in the registry configuration YAML file created when you deployed your Docker registry.

s3 storage driver example:

    storage:
      s3:
        accesskey: 's3-access-key'                # Not needed if IAM role used
        secretkey: 's3-secret-key-for-access-key' # Not needed if IAM role used
        bucket: 'your-s3-bucket'
        region: 'your-s3-region'
        regionendpoint: 'your-s3-regionendpoint'
      cache:
        blobdescriptor: inmemory
      delete:
        enabled: true

your-s3-bucket should be the name of a bucket that exists, and can't include subdirectories.

Migrate to object storage without downtime

WARNING: Using AWS DataSync to copy the registry data to or between S3 buckets creates invalid metadata objects in the bucket. For additional details, see Tags with an empty name. To move data to and between S3 buckets, the AWS CLI sync operation is recommended.

To migrate storage without stopping the Container Registry, set the Container Registry to read-only mode. On large instances, this may require the Container Registry to be in read-only mode for a while. During this time, you can pull from the Container Registry, but you cannot push.

- Optional: To reduce the amount of data to be migrated, run the garbage collection tool without downtime.

- This example uses the aws CLI. If you haven't configured the CLI before, you have to configure your credentials by running sudo aws configure. Because a non-administrator user likely can't access the Container Registry folder, ensure you use sudo. To check your credential configuration, run ls to list all buckets.

      sudo aws --endpoint-url https://your-object-storage-backend.com s3 ls

  If you are using AWS as your back end, you do not need the --endpoint-url.

- Copy initial data to your S3 bucket, for example with the aws CLI cp or sync command. Make sure to keep the docker folder as the top-level folder inside the bucket.

      sudo aws --endpoint-url https://your-object-storage-backend.com s3 sync registry s3://mybucket

  NOTE: If you have a lot of data, you may be able to improve performance by running parallel sync operations.

- To perform the final data sync, put the Container Registry in read-only mode and reconfigure GitLab.

- Sync any changes since the initial data load to your S3 bucket and delete files that exist in the destination bucket but not in the source:

      sudo aws --endpoint-url https://your-object-storage-backend.com s3 sync registry s3://mybucket --delete --dryrun

  After verifying the command performs as expected, remove the --dryrun flag and run the command.

  WARNING: The --delete flag deletes files that exist in the destination but not in the source. If you swap the source and destination, all data in the Registry is deleted.

- Verify all Container Registry files have been uploaded to object storage by looking at the file count returned by these two commands:

      sudo find registry -type f | wc -l

      sudo aws --endpoint-url https://your-object-storage-backend.com s3 ls s3://mybucket --recursive | wc -l

  The output of these commands should match, except for the content in the _uploads directories and sub-directories.

- Configure your registry to use the S3 bucket for storage.

- For the changes to take effect, set the Registry back to read-write mode and reconfigure GitLab.
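Because the two counts in the verification step are expected to differ only by _uploads content, it can help to exclude those paths before comparing. The following Ruby sketch assumes you have already collected the local file paths and the bucket object keys into two arrays (for example from the command output above); the helper name and sample paths are hypothetical.

```ruby
# Hypothetical helper: counts paths that are not inside an `_uploads`
# directory, so that local and bucket listings can be compared fairly.
def count_excluding_uploads(paths)
  paths.count { |path| !path.split("/").include?("_uploads") }
end

# Sample data for illustration only.
local_paths = [
  "docker/registry/v2/blobs/sha256/aa/data",
  "docker/registry/v2/repositories/group/project/_uploads/123/data"
]
bucket_keys = [
  "docker/registry/v2/blobs/sha256/aa/data"
]

puts count_excluding_uploads(local_paths) == count_excluding_uploads(bucket_keys)
```

If the filtered counts differ, rerun the sync before switching the registry to the bucket.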

Moving to Azure Object Storage

WARNING:

The default configuration for the storage driver will be changed in GitLab 16.0. The storage driver will use / as the default root directory. You can add trimlegacyrootprefix: false to your current configuration now to avoid any disruptions. For more information, see the Container Registry configuration documentation.

When moving from an existing file system or another object storage provider to Azure Object Storage, you must configure the registry to use the standard root directory.

This configuration is done by setting trimlegacyrootprefix: true in the Azure storage driver section of the registry configuration.

Without this configuration, the Azure storage driver uses // instead of / as the first section of the root path, rendering the migrated images inaccessible.

Omnibus GitLab installations

    registry['storage'] = {
      'azure' => {
        'accountname' => 'accountname',
        'accountkey' => 'base64encodedaccountkey',
        'container' => 'containername',
        'rootdirectory' => '/azure/virtual/container',
        'trimlegacyrootprefix' => true
      }
    }

Installations from source

    storage:
      azure:
        accountname: accountname
        accountkey: base64encodedaccountkey
        container: containername
        rootdirectory: /azure/virtual/container
        trimlegacyrootprefix: true

Disable redirect for storage driver

By default, users accessing a registry configured with a remote backend are redirected to the default backend for the storage driver. For example, registries can be configured using the s3 storage driver, which redirects requests to a remote S3 bucket to alleviate load on the GitLab server.

However, this behavior is undesirable for registries used by internal hosts that usually can't access public servers. To disable redirects and proxy downloads, set the disable flag to true as follows. This makes all traffic always go through the Registry service. This results in improved security (a smaller attack surface, because the storage backend is not publicly accessible), but worse performance (all traffic is routed through the service).

Omnibus GitLab installations

- Edit /etc/gitlab/gitlab.rb:

      registry['storage'] = {
        's3' => {
          'accesskey' => 's3-access-key',
          'secretkey' => 's3-secret-key-for-access-key',
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region',
          'regionendpoint' => 'your-s3-regionendpoint'
        },
        'redirect' => {
          'disable' => true
        }
      }

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

- Add the redirect flag to your registry configuration YAML file:

      storage:
        s3:
          accesskey: 'AKIAKIAKI'
          secretkey: 'secret123'
          bucket: 'gitlab-registry-bucket-AKIAKIAKI'
          region: 'your-s3-region'
          regionendpoint: 'your-s3-regionendpoint'
        redirect:
          disable: true
        cache:
          blobdescriptor: inmemory
        delete:
          enabled: true

- Save the file and restart GitLab for the changes to take effect.

Encrypted S3 buckets

You can use server-side encryption with AWS KMS for S3 buckets that have SSE-S3 or SSE-KMS encryption enabled by default. Customer master keys (CMKs) and SSE-C encryption aren't supported since this requires sending the encryption keys in every request.

For SSE-S3, you must enable the encrypt option in the registry settings. How you do this depends on how you installed GitLab. Follow the instructions here that match your installation method.

For Omnibus GitLab installations:

- Edit /etc/gitlab/gitlab.rb:

      registry['storage'] = {
        's3' => {
          'accesskey' => 's3-access-key',
          'secretkey' => 's3-secret-key-for-access-key',
          'bucket' => 'your-s3-bucket',
          'region' => 'your-s3-region',
          'regionendpoint' => 'your-s3-regionendpoint',
          'encrypt' => true
        }
      }

- Save the file and reconfigure GitLab for the changes to take effect.

For installations from source:

- Edit your registry configuration YAML file:

      storage:
        s3:
          accesskey: 'AKIAKIAKI'
          secretkey: 'secret123'
          bucket: 'gitlab-registry-bucket-AKIAKIAKI'
          region: 'your-s3-region'
          regionendpoint: 'your-s3-regionendpoint'
          encrypt: true

- Save the file and restart GitLab for the changes to take effect.

Storage limitations

Currently, there is no storage limitation, which means a user can upload an unlimited number of Docker images of arbitrary size. This setting should be configurable in future releases.

Change the registry's internal port

The Registry server listens on localhost at port 5000 by default, which is the address for which the Registry server should accept connections. In the examples below we set the Registry's port to 5010.

Omnibus GitLab

- Open /etc/gitlab/gitlab.rb and set registry['registry_http_addr']:

      registry['registry_http_addr'] = "localhost:5010"

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

- Open the configuration file of your Registry server and edit the http:addr value:

      http:
        addr: localhost:5010

- Save the file and restart the Registry server.

Disable Container Registry per project

If Registry is enabled in your GitLab instance, but you don't need it for your project, you can disable it from your project's settings.

Use an external container registry with GitLab as an auth endpoint

Support for external container registries in GitLab is deprecated in GitLab 15.8 and will be removed in GitLab 16.0.

If you use an external container registry, some features associated with the container registry may be unavailable or have inherent risks.

For the integration to work, the external registry must be configured to use a JSON Web Token to authenticate with GitLab. The external registry's runtime configuration must have the following entries:

    auth:
      token:
        realm: https://gitlab.example.com/jwt/auth
        service: container_registry
        issuer: gitlab-issuer
        rootcertbundle: /root/certs/certbundle

Without these entries, the registry logins cannot authenticate with GitLab. GitLab also remains unaware of nested image names under the project hierarchy, like registry.example.com/group/project/image-name:tag or registry.example.com/group/project/my/image-name:tag, and only recognizes registry.example.com/group/project:tag.

Omnibus GitLab

You can use GitLab as an auth endpoint with an external container registry.

- Open /etc/gitlab/gitlab.rb and set necessary configurations:

      gitlab_rails['registry_enabled'] = true
      gitlab_rails['registry_api_url'] = "https://<external_registry_host>:5000"
      gitlab_rails['registry_issuer'] = "gitlab-issuer"

  - gitlab_rails['registry_enabled'] = true is needed to enable GitLab Container Registry features and authentication endpoint. The GitLab bundled Container Registry service does not start, even with this enabled.
  - gitlab_rails['registry_api_url'] = "https://<external_registry_host>:5000" must be changed to match the host where Registry is installed. It must also specify https if the external registry is configured to use TLS. Read more on the Docker registry documentation.

- A certificate-key pair is required for GitLab and the external container registry to communicate securely. You need to create a certificate-key pair, configuring the external container registry with the public certificate (rootcertbundle) and configuring GitLab with the private key. To do that, add the following to /etc/gitlab/gitlab.rb:

      # registry['internal_key'] should contain the contents of the custom key
      # file. Line breaks in the key file should be marked using `\n` character
      # Example:
      registry['internal_key'] = "---BEGIN RSA PRIVATE KEY---\nMIIEpQIBAA\n"

      # Optionally define a custom file for Omnibus GitLab to write the contents
      # of registry['internal_key'] to.
      gitlab_rails['registry_key_path'] = "/custom/path/to/registry-key.key"

  Each time reconfigure is executed, the file specified at registry_key_path gets populated with the content specified by internal_key. If no file is specified, Omnibus GitLab defaults it to /var/opt/gitlab/gitlab-rails/etc/gitlab-registry.key and populates it.

- To change the container registry URL displayed in the GitLab Container Registry pages, set the following configurations:

      gitlab_rails['registry_host'] = "registry.gitlab.example.com"
      gitlab_rails['registry_port'] = "5005"

- Save the file and reconfigure GitLab for the changes to take effect.
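Because registry['internal_key'] expects the key on a single line with `\n` markers, it can be convenient to generate that string from the key file rather than edit it by hand. This is a minimal Ruby sketch; the helper name escape_pem_for_gitlab_rb is hypothetical and the sample key text is a truncated placeholder, not a real key.

```ruby
# Hypothetical helper: converts multi-line PEM text into one line in which
# every line break is written as the two characters backslash and "n",
# ready to paste between double quotes in /etc/gitlab/gitlab.rb.
def escape_pem_for_gitlab_rb(pem_text)
  pem_text.lines.map(&:chomp).join('\n') + '\n'
end

# Placeholder content for illustration only.
sample_key = "---BEGIN RSA PRIVATE KEY---\nMIIEpQIBAA\n"
puts escape_pem_for_gitlab_rb(sample_key)
```

In practice you would pass the result of File.read on your key file instead of the sample string.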

Installations from source

- Open /home/git/gitlab/config/gitlab.yml, and edit the configuration settings under registry:

      ## Container Registry
      registry:
        enabled: true
        host: "registry.gitlab.example.com"
        port: "5005"
        api_url: "https://<external_registry_host>:5000"
        path: /var/lib/registry
        key: /path/to/keyfile
        issuer: gitlab-issuer

  Read more about what these parameters mean.

- Save the file and restart GitLab for the changes to take effect.

Configure Container Registry notifications

You can configure the Container Registry to send webhook notifications in response to events happening within the registry.

Read more about the Container Registry notifications configuration options in the Docker Registry notifications documentation.

You can configure multiple endpoints for the Container Registry.
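On the receiving side, an endpoint typically parses the JSON body that the registry posts. The Ruby sketch below assumes the envelope shape described in the Docker Registry notifications documentation (a top-level events array whose entries carry an action and a target with repository and tag); the function name and the sample payload are illustrative, and real events contain more fields.

```ruby
require "json"

# Hypothetical handler: reduces a notification body to the action,
# repository, and tag of each event. Field names are assumptions taken
# from the Docker Registry notifications documentation.
def summarize_registry_events(body)
  JSON.parse(body).fetch("events", []).map do |event|
    target = event.fetch("target", {})
    { action: event["action"], repository: target["repository"], tag: target["tag"] }
  end
end

# Trimmed sample payload for illustration only.
sample = <<~PAYLOAD
  {"events": [{"action": "push", "target": {"repository": "group/project", "tag": "latest"}}]}
PAYLOAD

p summarize_registry_events(sample)
```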

Omnibus GitLab installations

To configure a notification endpoint in Omnibus:

- Edit /etc/gitlab/gitlab.rb:

      registry['notifications'] = [
        {
          'name' => 'test_endpoint',
          'url' => 'https://gitlab.example.com/notify',
          'timeout' => '500ms',
          'threshold' => 5,
          'backoff' => '1s',
          'headers' => {
            "Authorization" => ["AUTHORIZATION_EXAMPLE_TOKEN"]
          }
        }
      ]

- Save the file and reconfigure GitLab for the changes to take effect.

Installations from source

Configuring the notification endpoint is done in your registry configuration YAML file created when you deployed your Docker registry.

Example:

    notifications:
      endpoints:
        - name: alistener
          disabled: false
          url: https://my.listener.com/event
          headers: <http.Header>
          timeout: 500
          threshold: 5
          backoff: 1000

Run the Cleanup policy now

WARNING:

If you're using a distributed architecture and Sidekiq is running on a different node, the cleanup policies don't work. To fix this, you must configure the gitlab.rb file on the Sidekiq nodes to point to the correct registry URL and copy the registry.key file to each Sidekiq node. For more information, see the Sidekiq configuration page.

To reduce the amount of Container Registry disk space used by a given project, administrators can clean up image tags and run garbage collection.

Registry Disk Space Usage by Project

To find the disk space used by each project, run the following in the GitLab Rails console:

    projects_and_size = [["project_id", "creator_id", "registry_size_bytes", "project path"]]
    # You need to specify the projects that you want to look through. You can get these in any manner.
    projects = Project.last(100)

    projects.each do |p|
      project_total_size = 0
      container_repositories = p.container_repositories

      container_repositories.each do |c|
        c.tags.each do |t|
          project_total_size = project_total_size + t.total_size unless t.total_size.nil?
        end
      end

      if project_total_size > 0
        projects_and_size << [p.project_id, p.creator.id, project_total_size, p.full_path]
      end
    end

    # print it as comma separated output
    projects_and_size.each do |ps|
      puts "%s,%s,%s,%s" % ps
    end

To remove image tags by running the cleanup policy, run the following commands in the GitLab Rails console:

    # Numeric ID of the project whose container registry should be cleaned up
    P = <project_id>

    # Numeric ID of a user with Developer, Maintainer, or Owner role for the project
    U = <user_id>

    # Get required details / objects
    user = User.find_by_id(U)
    project = Project.find_by_id(P)
    policy = ContainerExpirationPolicy.find_by(project_id: P)

    # Loop through each container repository
    project.container_repositories.find_each do |repo|
      puts repo.attributes

      # Start the tag cleanup
      puts Projects::ContainerRepository::CleanupTagsService.new(container_repository: repo, current_user: user, params: policy.attributes.except("created_at", "updated_at")).execute
    end

You can also run cleanup on a schedule.

Container Registry garbage collection

NOTE: Retention policies within your object storage provider, such as Amazon S3 Lifecycle, may prevent objects from being properly deleted.

Container Registry can use considerable amounts of disk space. To clear up some unused layers, the registry includes a garbage collect command.

GitLab offers a set of APIs to manipulate the Container Registry and aid the process of removing unused tags. Currently, this is exposed using the API, but in the future, these controls should migrate to the GitLab interface.

Users who have the Maintainer role for the project can delete Container Registry tags in bulk periodically based on their own criteria. However, deleting the tags alone does not recycle data; it only unlinks tags from manifests and image blobs. To recycle the Container Registry data in the whole GitLab instance, you can use the built-in garbage collection command provided by gitlab-ctl.

Prerequisites:

- You must have installed GitLab by using an Omnibus package or the GitLab Helm chart.

- You must set the Registry to read-only mode. Running garbage collection causes downtime for the Container Registry. When you run this command on an instance in an environment where another instance is still writing to the Registry storage, referenced manifests are removed.

Understanding the content-addressable layers

Consider the following example, where you first build the image:

# This builds an image with content of sha256:111111
docker build -t my.registry.com/my.group/my.project:latest .
docker push my.registry.com/my.group/my.project:latest

Now, you overwrite :latest with a new version:

# This builds an image with content of sha256:222222
docker build -t my.registry.com/my.group/my.project:latest .
docker push my.registry.com/my.group/my.project:latest

Now, the :latest tag points to the manifest of sha256:222222. However, due to the architecture of the registry, the old data is still accessible when pulling the image my.registry.com/my.group/my.project@sha256:111111, even though it is no longer directly accessible via the :latest tag.
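The behavior described above can be illustrated with a minimal Ruby sketch. It is not how the registry is implemented; `ToyRegistry` and its methods are hypothetical names used only to show that a tag is a mutable pointer while the content stays stored under its digest.

```ruby
require 'digest'

# Hypothetical in-memory stand-in for a registry: content is stored by
# digest, and tags are just mutable pointers to digests.
class ToyRegistry
  def initialize
    @manifests = {} # digest => content
    @tags = {}      # tag name => digest
  end

  # Store the content under its digest and point the tag at it.
  def push(tag, content)
    digest = "sha256:#{Digest::SHA256.hexdigest(content)}"
    @manifests[digest] = content
    @tags[tag] = digest
    digest
  end

  def pull_by_tag(tag)
    @manifests[@tags[tag]]
  end

  def pull_by_digest(digest)
    @manifests[digest]
  end
end

registry = ToyRegistry.new
first = registry.push('latest', 'image contents, version 1')
second = registry.push('latest', 'image contents, version 2')

puts registry.pull_by_tag('latest')  # the tag now resolves to version 2
puts registry.pull_by_digest(first)  # version 1 is still stored under its digest
```

Overwriting the tag changes only the pointer, which is why the old content keeps using storage until garbage collection removes it.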

Recycling unused tags

Before you run the built-in command, note the following:

- The built-in command stops the registry before it starts the garbage collection.

- The garbage collect command takes some time to complete, depending on the amount of data that exists.

- If you changed the location of registry configuration file, you must specify its path.

- After the garbage collection is done, the registry should start automatically.

If you did not change the default location of the configuration file, run:

sudo gitlab-ctl registry-garbage-collect

This command takes some time to complete, depending on the number of layers you have stored.

If you changed the location of the Container Registry config.yml:

sudo gitlab-ctl registry-garbage-collect /path/to/config.yml

You may also remove all untagged manifests and unreferenced layers, although this is a much more destructive operation, and you should first understand the implications.

Removing untagged manifests and unreferenced layers

WARNING: This is a destructive operation.

The GitLab Container Registry follows the same default workflow as Docker Distribution: retain untagged manifests and all layers, even ones that are not referenced directly. All content can be accessed by using content-addressable identifiers.

However, in most workflows, you don't care about untagged manifests and old layers if they are not directly referenced by a tagged manifest. The registry-garbage-collect command supports the -m switch to allow you to remove all unreferenced manifests and layers that are not directly accessible via tag:

sudo gitlab-ctl registry-garbage-collect -m

Because this is a much more destructive operation, this behavior is disabled by default. This is likely the behavior you expect, but before running it, ensure that you have backed up all registry data.

When the command is used without the -m flag, the Container Registry only removes layers that are not referenced by any manifest, tagged or not.
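The difference between the two modes can be sketched as a toy mark-and-sweep in Ruby. This is an illustration under simplifying assumptions, not the registry's actual algorithm: the `garbage` function, its `remove_untagged` option, and the layer names are all hypothetical.

```ruby
require 'set'

# Toy model: manifests reference layers, and tags reference manifests.
# Returns the manifests and layers that a sweep would delete.
def garbage(manifests, layers, tags, remove_untagged: false)
  tagged = tags.values.to_set
  # With remove_untagged (comparable to -m), only tagged manifests survive.
  kept = remove_untagged ? manifests.select { |digest, _| tagged.include?(digest) } : manifests
  # Mark phase: every layer referenced by a surviving manifest is in use.
  marked = kept.values.flatten.to_set
  {
    manifests: manifests.keys - kept.keys,
    layers: layers.reject { |layer| marked.include?(layer) }
  }
end

manifests = {
  'sha256:111111' => %w[layer-a layer-b], # untagged after :latest was overwritten
  'sha256:222222' => %w[layer-a layer-c]  # currently tagged as :latest
}
layers = %w[layer-a layer-b layer-c layer-orphan]
tags = { 'latest' => 'sha256:222222' }

p garbage(manifests, layers, tags)
p garbage(manifests, layers, tags, remove_untagged: true)
```

In the first call only `layer-orphan` is collected, because the untagged manifest still references `layer-b`. In the second call the untagged manifest is removed as well, so `layer-b` is also collected.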

Performing garbage collection without downtime

You can perform garbage collection without stopping the Container Registry by putting it in read-only mode and by not using the built-in command. On large instances this could require Container Registry to be in read-only mode for a while. During this time, you are able to pull from the Container Registry, but you are not able to push.

By default, the registry storage path is /var/opt/gitlab/gitlab-rails/shared/registry.

To enable the read-only mode:

- In /etc/gitlab/gitlab.rb, specify the read-only mode:

  registry['storage'] = {
    'filesystem' => {
      'rootdirectory' => "<your_registry_storage_path>"
    },
    'maintenance' => {
      'readonly' => {
        'enabled' => true
      }
    }
  }

- Save and reconfigure GitLab:

  sudo gitlab-ctl reconfigure

  This command sets the Container Registry into the read-only mode.

- Next, trigger one of the garbage collect commands:

  WARNING: You must use /opt/gitlab/embedded/bin/registry to recycle unused tags. If you use gitlab-ctl registry-garbage-collect, you will bring the container registry down.

  # Recycling unused tags
  sudo /opt/gitlab/embedded/bin/registry garbage-collect /var/opt/gitlab/registry/config.yml

  # Removing unused layers not referenced by manifests
  sudo /opt/gitlab/embedded/bin/registry garbage-collect -m /var/opt/gitlab/registry/config.yml

  This command starts the garbage collection, which might take some time to complete.

- Once done, in /etc/gitlab/gitlab.rb change it back to read-write mode:

  registry['storage'] = {
    'filesystem' => {
      'rootdirectory' => "<your_registry_storage_path>"
    },
    'maintenance' => {
      'readonly' => {
        'enabled' => false
      }
    }
  }

- Save and reconfigure GitLab:

  sudo gitlab-ctl reconfigure

Running the garbage collection on schedule

Ideally, run the garbage collection of the registry weekly, at a time when the registry is not in use. The simplest way is to add a crontab job that runs once a week.

Create a file under /etc/cron.d/registry-garbage-collect:

SHELL=/bin/sh

PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin

# Run every Sunday at 04:05am

5 4 * * 0 root gitlab-ctl registry-garbage-collect

You may want to add the -m flag to remove untagged manifests and unreferenced layers.

Stop garbage collection

If you anticipate stopping garbage collection, you should manually run garbage collection as described in Performing garbage collection without downtime. You can then stop garbage collection by pressing Control+C.

Otherwise, interrupting gitlab-ctl could leave your registry service in a down state. In this

case, you must find the garbage collection process

itself on the system so that the gitlab-ctl command can bring the registry service back up again.

Also, there's no way to save progress or results during the mark phase of the process. Only once blobs start being deleted is anything permanent done.

Configuring GitLab and Registry to run on separate nodes (Omnibus GitLab)

By default, the package assumes that both services are running on the same node. To run GitLab and the Registry on separate nodes, separate configuration is necessary for Registry and GitLab.

Configuring Registry

Below you can find configuration options you should set in /etc/gitlab/gitlab.rb, for Registry to run separately from GitLab:

- registry['registry_http_addr'], default set programmatically. Needs to be reachable by web server (or LB).

- registry['token_realm'], default set programmatically. Specifies the endpoint to use to perform authentication, usually the GitLab URL. This endpoint needs to be reachable by the user.

- registry['http_secret'], random string. A random piece of data used to sign state that may be stored with the client to protect against tampering.

- registry['internal_key'], default automatically generated. Contents of the key that GitLab uses to sign the tokens. The key gets created on the Registry server, but it is not used there.

- gitlab_rails['registry_key_path'], default set programmatically. This is the path where internal_key contents are written to disk.

- registry['internal_certificate'], default automatically generated. Contents of the certificate that GitLab uses to sign the tokens.

- registry['rootcertbundle'], default set programmatically. Path to certificate. This is the path where internal_certificate contents are written to disk.

- registry['health_storagedriver_enabled'], default set programmatically. Configure whether health checks on the configured storage driver are enabled.

- gitlab_rails['registry_issuer'], default value. This setting needs to be set the same between Registry and GitLab.

Configuring GitLab

Below you can find configuration options you should set in /etc/gitlab/gitlab.rb, for GitLab to run separately from Registry:

- gitlab_rails['registry_enabled'], must be set to true. This setting signals to GitLab that it should allow Registry API requests.

- gitlab_rails['registry_api_url'], default set programmatically. This is the Registry URL used internally that users do not need to interact with, registry['registry_http_addr'] with scheme.

- gitlab_rails['registry_host'], for example, registry.gitlab.example. Registry endpoint without the scheme, the address that gets shown to the end user.

- gitlab_rails['registry_port']. Registry endpoint port, visible to the end user.

- gitlab_rails['registry_issuer'] must match the issuer in the Registry configuration.

- gitlab_rails['registry_key_path'], path to the key that matches the certificate on the Registry side.

- gitlab_rails['internal_key'], contents of the key that GitLab uses to sign the tokens.

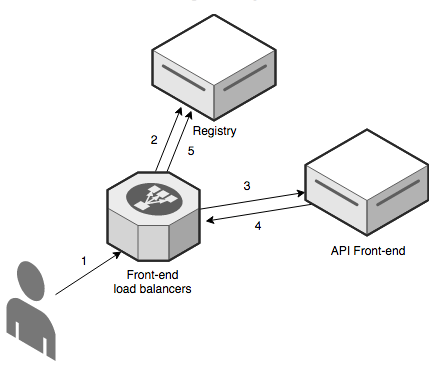

Architecture of GitLab Container Registry

The GitLab registry is what users use to store their own Docker images. Because of that the Registry is client facing, meaning that we expose it directly on the web server (or load balancers, LB for short).

The flow described by the diagram above:

- A user runs docker login registry.gitlab.example on their client. This reaches the web server (or LB) on port 443.

- Web server connects to the Registry backend pool (by default, using port 5000). Since the user didn't provide a valid token, the Registry returns a 401 HTTP code and the URL (token_realm from Registry configuration) where to get one. This points to the GitLab API.

- The Docker client then connects to the GitLab API and obtains a token.

- The API signs the token with the registry key and hands it to the Docker client.

- The Docker client now logs in again with the token received from the API. It can now push and pull Docker images.

Reference: https://docs.docker.com/registry/spec/auth/token/
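The second and third steps of this flow can be sketched in Ruby: the client reads the Bearer challenge returned with the 401 response and builds the token request URL from it. This is a minimal sketch based on the token authentication specification referenced above; the helper names, host names, and scope value are illustrative assumptions, and no network request is made.

```ruby
require 'uri'

# Parse a Bearer challenge (the WWW-Authenticate header of the 401 response)
# and return its key/value parameters.
def parse_bearer_challenge(header)
  scheme, params = header.split(' ', 2)
  raise ArgumentError, "unexpected scheme: #{scheme}" unless scheme.casecmp?('Bearer')

  params.scan(/(\w+)="([^"]*)"/).to_h
end

# Build the URL the client would call on the realm to request a token.
def token_url(challenge)
  uri = URI(challenge.fetch('realm'))
  query = challenge.slice('service', 'scope')
  uri.query = URI.encode_www_form(query) unless query.empty?
  uri.to_s
end

# Example challenge; the realm host and the scope are placeholders.
header = 'Bearer realm="https://gitlab.example/jwt/auth",' \
         'service="container_registry",scope="repository:group/project:pull"'
challenge = parse_bearer_challenge(header)
puts token_url(challenge)
```

The realm in the challenge corresponds to the token_realm setting, which is why that endpoint must be reachable by the user's Docker client.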

Communication between GitLab and Registry

Registry doesn't have a way to authenticate users internally so it relies on GitLab to validate credentials. The connection between Registry and GitLab is TLS encrypted. The key is used by GitLab to sign the tokens while the certificate is used by Registry to validate the signature. By default, a self-signed certificate key pair is generated for all installations. This can be overridden as needed.

GitLab interacts with the Registry using the Registry private key. When a Registry request goes out, a new short-lived (10 minutes), namespace-limited token is generated and signed with the private key. The Registry then verifies that the signature matches the registry certificate specified in its configuration and allows the operation. GitLab background job processing (through Sidekiq) also interacts with the Registry. These jobs talk directly to the Registry to handle image deletion.
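The relationship between the key and the certificate can be sketched with Ruby's OpenSSL library. This is only an illustration of signing with a private key and verifying with the matching certificate: the real tokens are JWTs, and the issuer name and payload fields below are assumptions made for the example.

```ruby
require 'openssl'
require 'json'

# Generate a throwaway key and self-signed certificate for the demo.
key = OpenSSL::PKey::RSA.new(2048)
cert = OpenSSL::X509::Certificate.new
cert.version = 2
cert.serial = 1
cert.subject = cert.issuer = OpenSSL::X509::Name.parse('/CN=example-registry-issuer')
cert.public_key = key.public_key
cert.not_before = Time.now
cert.not_after = Time.now + 3600
cert.sign(key, OpenSSL::Digest.new('SHA256'))

# "GitLab side": sign a short-lived payload with the private key.
payload = JSON.generate(iss: 'example-registry-issuer', exp: Time.now.to_i + 600)
signature = key.sign(OpenSSL::Digest.new('SHA256'), payload)

# "Registry side": only the certificate is needed to check the signature.
valid = cert.public_key.verify(OpenSSL::Digest.new('SHA256'), signature, payload)
tampered = cert.public_key.verify(OpenSSL::Digest.new('SHA256'), signature, payload + 'x')

puts "valid signature: #{valid}"
puts "tampered payload accepted: #{tampered}"
```

Because verification needs only the certificate, the Registry never has to hold the private key.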

Troubleshooting

Before diving into the following sections, here's some basic troubleshooting:

- Check to make sure that the system clocks on your Docker client and GitLab server are synchronized (for example, via NTP).

- If you are using an S3-backed Registry, double check that the IAM permissions and the S3 credentials (including region) are correct. See the sample IAM policy for more details.

- Check the Registry logs (for example /var/log/gitlab/registry/current) and the GitLab production logs for errors (for example /var/log/gitlab/gitlab-rails/production.log). You may be able to find clues there.

Using self-signed certificates with Container Registry

If you're using a self-signed certificate with your Container Registry, you might encounter issues during the CI jobs like the following:

Error response from daemon: Get registry.example.com/v1/users/: x509: certificate signed by unknown authority

The Docker daemon running the command expects a certificate signed by a recognized CA, thus the error above.

While GitLab doesn't support using self-signed certificates with Container Registry out of the box, it is possible to make it work by instructing the Docker daemon to trust the self-signed certificates, mounting the Docker daemon and setting privileged = false in the GitLab Runner config.toml file. Setting privileged = true takes precedence over the Docker daemon:

[runners.docker]

image = "ruby:2.6"

privileged = false

volumes = ["/var/run/docker.sock:/var/run/docker.sock", "/cache"]

Additional information about this: issue 18239.

Docker login attempt fails with: 'token signed by untrusted key'

Registry relies on GitLab to validate credentials. If the registry fails to authenticate valid login attempts, you get the following error message:

# docker login gitlab.company.com:4567

Username: user

Password:

Error response from daemon: login attempt to https://gitlab.company.com:4567/v2/ failed with status: 401 Unauthorized

And more specifically, this appears in the /var/log/gitlab/registry/current log file:

level=info msg="token signed by untrusted key with ID: "TOKE:NL6Q:7PW6:EXAM:PLET:OKEN:BG27:RCIB:D2S3:EXAM:PLET:OKEN""

level=warning msg="error authorizing context: invalid token" go.version=go1.12.7 http.request.host="gitlab.company.com:4567" http.request.id=74613829-2655-4f96-8991-1c9fe33869b8 http.request.method=GET http.request.remoteaddr=10.72.11.20 http.request.uri="/v2/" http.request.useragent="docker/19.03.2 go/go1.12.8 git-commit/6a30dfc kernel/3.10.0-693.2.2.el7.x86_64 os/linux arch/amd64 UpstreamClient(Docker-Client/19.03.2 \(linux\))"

GitLab uses the private key of the certificate-key pair to sign the authentication token for the Registry, and the Registry uses the certificate to validate it. This message means that the two do not match.

Check which files are in use:

- grep -A6 'auth:' /var/opt/gitlab/registry/config.yml

  ## Container Registry Certificate
  auth:
    token:
      realm: https://gitlab.my.net/jwt/auth
      service: container_registry
      issuer: omnibus-gitlab-issuer
  -->  rootcertbundle: /var/opt/gitlab/registry/gitlab-registry.crt
      autoredirect: false

- grep -A9 'Container Registry' /var/opt/gitlab/gitlab-rails/etc/gitlab.yml

  ## Container Registry Key
  registry:
    enabled: true
    host: gitlab.company.com
    port: 4567
    api_url: http://127.0.0.1:5000 # internal address to the registry, is used by GitLab to directly communicate with API
    path: /var/opt/gitlab/gitlab-rails/shared/registry
  -->  key: /var/opt/gitlab/gitlab-rails/etc/gitlab-registry.key
    issuer: omnibus-gitlab-issuer
    notification_secret:

The output of these openssl commands should match, proving that the cert-key pair is a match:

/opt/gitlab/embedded/bin/openssl x509 -noout -modulus -in /var/opt/gitlab/registry/gitlab-registry.crt | /opt/gitlab/embedded/bin/openssl sha256

/opt/gitlab/embedded/bin/openssl rsa -noout -modulus -in /var/opt/gitlab/gitlab-rails/etc/gitlab-registry.key | /opt/gitlab/embedded/bin/openssl sha256

If the two pieces of the certificate do not align, remove the files and run gitlab-ctl reconfigure to regenerate the pair. The pair is recreated using the existing values in /etc/gitlab/gitlab-secrets.json if they exist. To generate a new pair, delete the registry section in your /etc/gitlab/gitlab-secrets.json before running gitlab-ctl reconfigure.

If you have overridden the automatically generated self-signed pair with your own certificates and have made sure that their contents align, you can delete the 'registry' section in your /etc/gitlab/gitlab-secrets.json and run gitlab-ctl reconfigure.
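The modulus comparison performed by the openssl commands above can also be sketched in Ruby. This is a minimal sketch that assumes an RSA key pair. The helper names are hypothetical, and the demo generates throwaway keys rather than reading the files under /var/opt/gitlab. The fingerprints are only comparable with each other, not with the output of the openssl commands, because the hashed input is formatted differently.

```ruby
require 'openssl'
require 'digest'

# SHA-256 fingerprint of an RSA modulus (works for public or private keys).
def modulus_fingerprint(rsa)
  Digest::SHA256.hexdigest(rsa.n.to_s(16))
end

# True when the certificate and the private key share the same modulus.
def pair_matches?(cert_pem, key_pem)
  cert = OpenSSL::X509::Certificate.new(cert_pem)
  key = OpenSSL::PKey::RSA.new(key_pem)
  modulus_fingerprint(cert.public_key) == modulus_fingerprint(key)
end

# Demo-only helper: build a self-signed certificate for the given key.
def self_signed_cert(key)
  cert = OpenSSL::X509::Certificate.new
  cert.version = 2
  cert.serial = 1
  cert.subject = cert.issuer = OpenSSL::X509::Name.parse('/CN=example-registry')
  cert.public_key = key.public_key
  cert.not_before = Time.now
  cert.not_after = Time.now + 3600
  cert.sign(key, OpenSSL::Digest.new('SHA256'))
  cert
end

key = OpenSSL::PKey::RSA.new(2048)
other_key = OpenSSL::PKey::RSA.new(2048)
cert_pem = self_signed_cert(key).to_pem

puts pair_matches?(cert_pem, key.to_pem)       # matching pair
puts pair_matches?(cert_pem, other_key.to_pem) # mismatched pair
```

To check a real installation, you could pass the contents of the certificate and key files found in the previous step, read with `File.read`, to `pair_matches?`.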

AWS S3 with the GitLab registry error when pushing large images

When using AWS S3 with the GitLab registry, an error may occur when pushing large images. Look in the Registry log for the following error:

level=error msg="response completed with error" err.code=unknown err.detail="unexpected EOF" err.message="unknown error"

To resolve the error, specify a chunksize value in the Registry configuration.

Start with a value between 25000000 (25 MB) and 50000000 (50 MB).

For Omnibus installations

- Edit /etc/gitlab/gitlab.rb:

  registry['storage'] = {
    's3' => {
      'accesskey' => 'AKIAKIAKI',
      'secretkey' => 'secret123',
      'bucket' => 'gitlab-registry-bucket-AKIAKIAKI',
      'chunksize' => 25000000
    }
  }

- Save the file and reconfigure GitLab for the changes to take effect.

For installations from source

- Edit config/gitlab.yml:

  storage:
    s3:
      accesskey: 'AKIAKIAKI'
      secretkey: 'secret123'
      bucket: 'gitlab-registry-bucket-AKIAKIAKI'
      chunksize: 25000000

- Save the file and restart GitLab for the changes to take effect.

Supporting older Docker clients

The Docker container registry shipped with GitLab disables the schema1 manifest by default. If you are still using older Docker clients (1.9 or older), you may experience an error pushing images. See omnibus-4145 for more details.

You can add a configuration option for backwards compatibility.

For Omnibus installations

- Edit /etc/gitlab/gitlab.rb:

  registry['compatibility_schema1_enabled'] = true

- Save the file and reconfigure GitLab for the changes to take effect.

For installations from source

- Edit the YAML configuration file you created when you deployed the registry. Add the following snippet:

  compatibility:
    schema1:
      enabled: true

- Restart the registry for the changes to take effect.

Docker connection error

A Docker connection error can occur when there are special characters in either the group, project or branch name. Special characters can include:

- Leading underscore

- Trailing hyphen/dash

- Double hyphen/dash

To get around this, you can change the group path, change the project path or change the branch name. Another option is to create a push rule to prevent this at the instance level.
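As a quick way to spot affected names before they reach the registry, the three patterns listed above can be checked with a few lines of Ruby. This is a minimal sketch: `risky_name_patterns` is a hypothetical helper, and it covers only the patterns named in this section.

```ruby
# Return the problems found in a single name (group, project, or branch).
def risky_name_patterns(name)
  problems = []
  problems << 'leading underscore' if name.start_with?('_')
  problems << 'trailing hyphen' if name.end_with?('-')
  problems << 'double hyphen' if name.include?('--')
  problems
end

%w[_internal my-project- my--project my-project].each do |name|
  problems = risky_name_patterns(name)
  puts "#{name}: #{problems.empty? ? 'ok' : problems.join(', ')}"
end
```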

Image push errors

When getting errors or "retrying" loops in an attempt to push an image but docker login works fine, there is likely an issue with the headers forwarded to the registry by NGINX. The default recommended NGINX configurations should handle this, but it might occur in custom setups where the SSL is offloaded to a third party reverse proxy.

This problem was discussed in a Docker project issue and a simple solution would be to enable relative URLs in the Registry.

For Omnibus installations

- Edit /etc/gitlab/gitlab.rb:

  registry['env'] = {
    "REGISTRY_HTTP_RELATIVEURLS" => true
  }

- Save the file and reconfigure GitLab for the changes to take effect.

For installations from source

- Edit the YAML configuration file you created when you deployed the registry. Add the following snippet:

  http:
    relativeurls: true

- Save the file and restart GitLab for the changes to take effect.

Enable the Registry debug server

You can use the Container Registry debug server to diagnose problems. The debug endpoint can monitor metrics and health, as well as do profiling.

WARNING: Sensitive information may be available from the debug endpoint. Access to the debug endpoint must be locked down in a production environment.

The optional debug server can be enabled by setting the registry debug address in your gitlab.rb configuration.

registry['debug_addr'] = "localhost:5001"

After adding the setting, reconfigure GitLab to apply the change.

Use curl to request debug output from the debug server:

curl "localhost:5001/debug/health"

curl "localhost:5001/debug/vars"

Access old schema v1 Docker images

Support for the Docker registry API V1, including schema V1 image manifests, has been removed. It's no longer possible to push or pull v1 images from the GitLab Container Registry.

If you had v1 images in the GitLab Container Registry, but you did not upgrade them (following the steps Docker recommends) ahead of the GitLab 13.9 upgrade, these images are no longer accessible. If you try to pull them, this error appears:

Error response from daemon: manifest invalid: Schema 1 manifest not supported

For Self-Managed GitLab instances, you can regain access to these images by temporarily downgrading the GitLab Container Registry to a version lower than v3.0.0-gitlab. Follow these steps to regain access to these images:

- Downgrade the Container Registry to v2.13.1-gitlab.

- Upgrade any v1 images.

- Revert the Container Registry downgrade.

There's no need to put the registry in read-only mode during the image upgrade process. Ensure that you are not relying on any new feature introduced since v3.0.0-gitlab. Such features are unavailable during the upgrade process. See the complete registry changelog for more information.

The following sections provide additional details about each installation method.

Helm chart installations

For Helm chart installations:

- Override the image.tag configuration parameter with v2.13.1-gitlab.

- Restart.

- Perform the images upgrade steps.

- Revert the image.tag parameter to the previous value.

No other registry configuration changes are required.

Omnibus installations

For Omnibus installations:

- Temporarily replace the registry binary that ships with GitLab 13.9+ with one prior to v3.0.0-gitlab. To do so, pull a previous version of the Docker image for the GitLab Container Registry, such as v2.13.1-gitlab. You can then grab the registry binary from within this image, located at /bin/registry:

  id=$(docker create registry.gitlab.com/gitlab-org/build/cng/gitlab-container-registry:v2.13.1-gitlab)
  docker cp $id:/bin/registry registry-2.13.1-gitlab
  docker rm $id

- Replace the binary embedded in the Omnibus install, located at /opt/gitlab/embedded/bin/registry, with registry-2.13.1-gitlab. Make sure to start by backing up the original binary embedded in Omnibus, and restore it after performing the images upgrade steps. You should stop the registry service before replacing its binary and start it right after. No registry configuration changes are required.

Source installations

For source installations, locate your registry binary and temporarily replace it with one prior to v3.0.0-gitlab (such as v2.13.1-gitlab), obtained as explained for Omnibus installations. Make sure to start by backing up the original registry binary, and restore it after performing the images upgrade steps.

Images upgrade

Follow the steps that Docker recommends to upgrade v1 images. The most straightforward option is to pull those images and push them once again to the registry, using a Docker client version above v1.12. Docker converts images automatically before pushing them to the registry. Once done, all your v1 images should now be available as v2 images.

Tags with an empty name

If using AWS DataSync to copy the registry data to or between S3 buckets, an empty metadata object is created in the root path of each container repository in the destination bucket. This causes the registry to interpret such files as a tag that appears with no name in the GitLab UI and API. For more information, see this issue.

To fix this you can do one of two things:

- Use the AWS CLI rm command to remove the empty objects from the root of each affected repository. Pay special attention to the trailing / and make sure not to use the --recursive option:

  aws s3 rm s3://<bucket>/docker/registry/v2/repositories/<path to repository>/

- Use the AWS CLI sync command to copy the registry data to a new bucket and configure the registry to use it. This leaves the empty objects behind.

Advanced Troubleshooting

We use a concrete example to illustrate how to diagnose a problem with the S3 setup.

Investigate a cleanup policy

If you're unsure why your cleanup policy did or didn't delete a tag, execute the policy line by line by running the below script from the Rails console. This can help diagnose problems with the policy.

# Numeric ID of the container repository (not the project ID)
repo = ContainerRepository.find(<container_repository_id>)

policy = repo.project.container_expiration_policy

tags = repo.tags

tags.map(&:name)

tags.reject!(&:latest?)

tags.map(&:name)

regex_delete = ::Gitlab::UntrustedRegexp.new("\\A#{policy.name_regex}\\z")

regex_retain = ::Gitlab::UntrustedRegexp.new("\\A#{policy.name_regex_keep}\\z")

tags.select! { |tag| regex_delete.match?(tag.name) && !regex_retain.match?(tag.name) }

tags.map(&:name)

now = DateTime.current

tags.sort_by! { |tag| tag.created_at || now }.reverse! # Lengthy operation

tags = tags.drop(policy.keep_n)

tags.map(&:name)

older_than_timestamp = ChronicDuration.parse(policy.older_than).seconds.ago

tags.select! { |tag| tag.created_at && tag.created_at < older_than_timestamp }

tags.map(&:name)

- The script builds the list of tags to delete (tags).

- tags.map(&:name) prints a list of tags to remove. This may be a lengthy operation.

- After each filter, check the list of tags to see if it contains the intended tags to destroy.
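To see how the filters interact without touching a live registry, the same sequence can be reproduced on sample data in plain Ruby. This is a simplified sketch, not GitLab code: `Tag`, `Policy`, and `tags_to_delete` are hypothetical stand-ins, a standard `Regexp` replaces `Gitlab::UntrustedRegexp`, and the age threshold is given in seconds instead of being parsed with `ChronicDuration`.

```ruby
Tag = Struct.new(:name, :created_at)
Policy = Struct.new(:name_regex, :name_regex_keep, :keep_n, :older_than_seconds)

# Apply the same filters, in the same order, as the console script above.
def tags_to_delete(tags, policy, now: Time.now)
  delete_re = Regexp.new("\\A#{policy.name_regex}\\z")
  keep_re = Regexp.new("\\A#{policy.name_regex_keep}\\z")

  candidates = tags.reject { |tag| tag.name == 'latest' }
  candidates = candidates.select { |tag| delete_re.match?(tag.name) && !keep_re.match?(tag.name) }
  # Newest first, then skip the keep_n most recent tags.
  candidates = candidates.sort_by { |tag| tag.created_at || now }.reverse.drop(policy.keep_n)
  cutoff = now - policy.older_than_seconds
  candidates.select { |tag| tag.created_at && tag.created_at < cutoff }
end

now = Time.utc(2024, 1, 31)
day = 86_400
tags = [
  Tag.new('latest', now - 1 * day),
  Tag.new('v1.0', now - 30 * day),
  Tag.new('v1.1', now - 20 * day),
  Tag.new('v1.2', now - 2 * day),
  Tag.new('release-1', now - 40 * day),
  Tag.new('dev-abc', now - 25 * day)
]
policy = Policy.new('.*', 'release-.*', 1, 7 * day)

p tags_to_delete(tags, policy, now: now).map(&:name)
```

With this sample data, `latest` and `release-1` are excluded by name, `v1.2` is kept as the most recent remaining tag, and the rest are older than the seven-day threshold, so they are selected for deletion.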

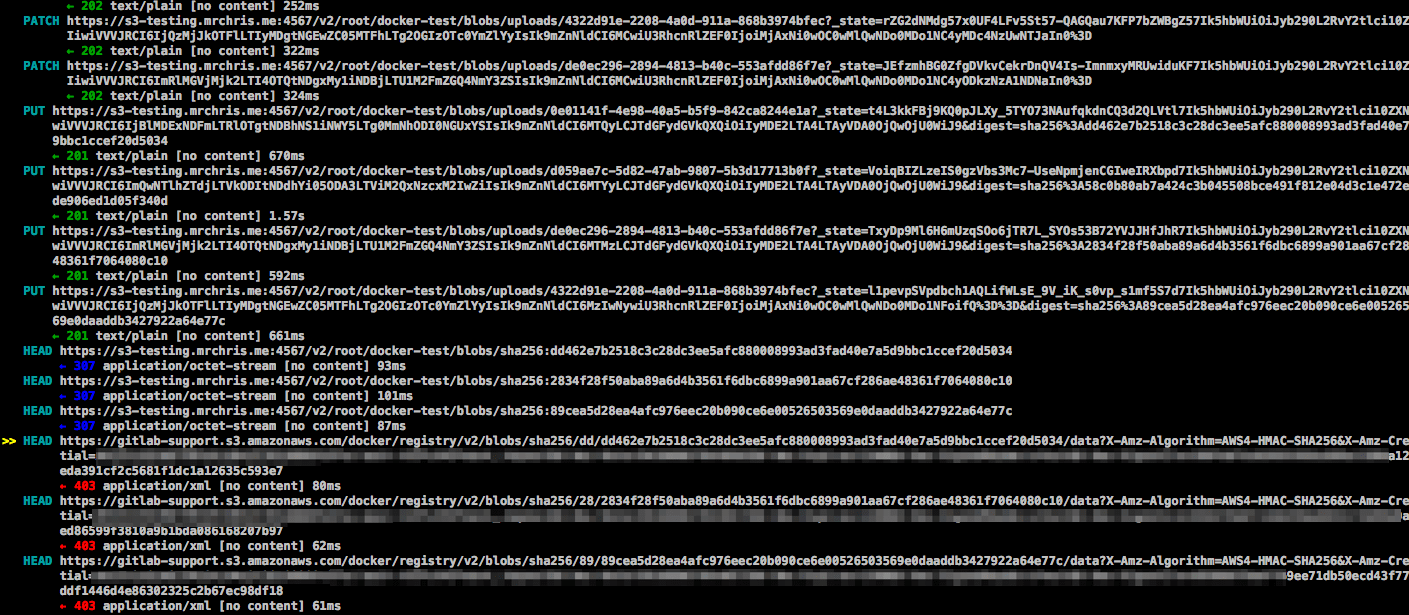

Unexpected 403 error during push

A user attempted to enable an S3-backed Registry. The docker login step went

fine. However, when pushing an image, the output showed:

The push refers to a repository [s3-testing.myregistry.com:5050/root/docker-test/docker-image]

dc5e59c14160: Pushing [==================================================>] 14.85 kB

03c20c1a019a: Pushing [==================================================>] 2.048 kB

a08f14ef632e: Pushing [==================================================>] 2.048 kB

228950524c88: Pushing 2.048 kB

6a8ecde4cc03: Pushing [==> ] 9.901 MB/205.7 MB

5f70bf18a086: Pushing 1.024 kB

737f40e80b7f: Waiting

82b57dbc5385: Waiting

19429b698a22: Waiting

9436069b92a3: Waiting

error parsing HTTP 403 response body: unexpected end of JSON input: ""

This error is ambiguous, as it's not clear whether the 403 is coming from the GitLab Rails application, the Docker Registry, or something else. In this case, since we know that the login succeeded, we probably need to look at the communication between the client and the Registry.

The REST API between the Docker client and Registry is described in the Docker documentation. Normally, one would just use Wireshark or tcpdump to capture the traffic and see where things went wrong. However, since all communications between Docker clients and servers are done over HTTPS, it's a bit difficult to decrypt the traffic quickly even if you know the private key. What can we do instead?

One way would be to disable HTTPS by setting up an insecure Registry. This could introduce a security hole and is only recommended for local testing. If you have a production system and can't or don't want to do this, there is another way: use mitmproxy, which stands for Man-in-the-Middle Proxy.

mitmproxy

mitmproxy allows you to place a proxy between your client and server to inspect all traffic. One wrinkle is that your system needs to trust the mitmproxy SSL certificates for this to work.

The following installation instructions assume you are running Ubuntu:

- Run mitmproxy --port 9000 to generate its certificates. Enter CTRL-C to quit.

- Install the certificate from ~/.mitmproxy to your system:

  sudo cp ~/.mitmproxy/mitmproxy-ca-cert.pem /usr/local/share/ca-certificates/mitmproxy-ca-cert.crt
  sudo update-ca-certificates

If successful, the output should indicate that a certificate was added:

Updating certificates in /etc/ssl/certs... 1 added, 0 removed; done.
Running hooks in /etc/ca-certificates/update.d....done.

To verify that the certificates are properly installed, run:

mitmproxy --port 9000

This command runs mitmproxy on port 9000. In another window, run:

curl --proxy "http://localhost:9000" "https://httpbin.org/status/200"

If everything is set up correctly, information is displayed on the mitmproxy window and no errors are generated by the curl commands.

Running the Docker daemon with a proxy

For Docker to connect through a proxy, you must start the Docker daemon with the proper environment variables. The easiest way is to shut down Docker (for example sudo initctl stop docker) and then run Docker by hand. As root, run:

export HTTP_PROXY="http://localhost:9000"
export HTTPS_PROXY="https://localhost:9000"
docker daemon --debug

This command launches the Docker daemon and proxies all connections through mitmproxy.

Running the Docker client

Now that we have mitmproxy and Docker running, we can attempt to sign in and push a container image. You may need to run as root to do this. For example:

docker login s3-testing.myregistry.com:5050

docker push s3-testing.myregistry.com:5050/root/docker-test/docker-image

In the example above, we see the following trace on the mitmproxy window:

The above image shows:

- The initial PUT requests went through fine with a 201 status code.

- The 201 redirected the client to the S3 bucket.

- The HEAD request to the AWS bucket reported a 403 Forbidden.

What does this mean? This strongly suggests that the S3 user does not have the right permissions to perform a HEAD request. The solution: check the IAM permissions again. Once the right permissions are set, the error goes away.

Missing gitlab-registry.key prevents container repository deletion

If you disable your GitLab instance's Container Registry and try to remove a project that has container repositories, the following error occurs:

Errno::ENOENT: No such file or directory @ rb_sysopen - /var/opt/gitlab/gitlab-rails/etc/gitlab-registry.key

In this case, follow these steps:

- Temporarily enable the instance-wide setting for the Container Registry in your gitlab.rb:

  gitlab_rails['registry_enabled'] = true

- Save the file and reconfigure GitLab for the changes to take effect.

- Try the removal again.

If you still can't remove the repository using the common methods, you can use the GitLab Rails console to remove the project by force:

# Path to the project you'd like to remove

prj = Project.find_by_full_path(<project_path>)

# The following will delete the project's container registry, so be sure to double-check the path beforehand!

if prj.has_container_registry_tags?

prj.container_repositories.each { |p| p.destroy }

end